Every generation of AMD's RDNA architecture brings major technical changes. The upcoming RDNA 3 architecture is expected to adopt multi-chip module (MCM) packaging and to use the workgroup processor (WGP) as its main compute block. Infinity Cache is also rumored to move to a 3D-stacked design.

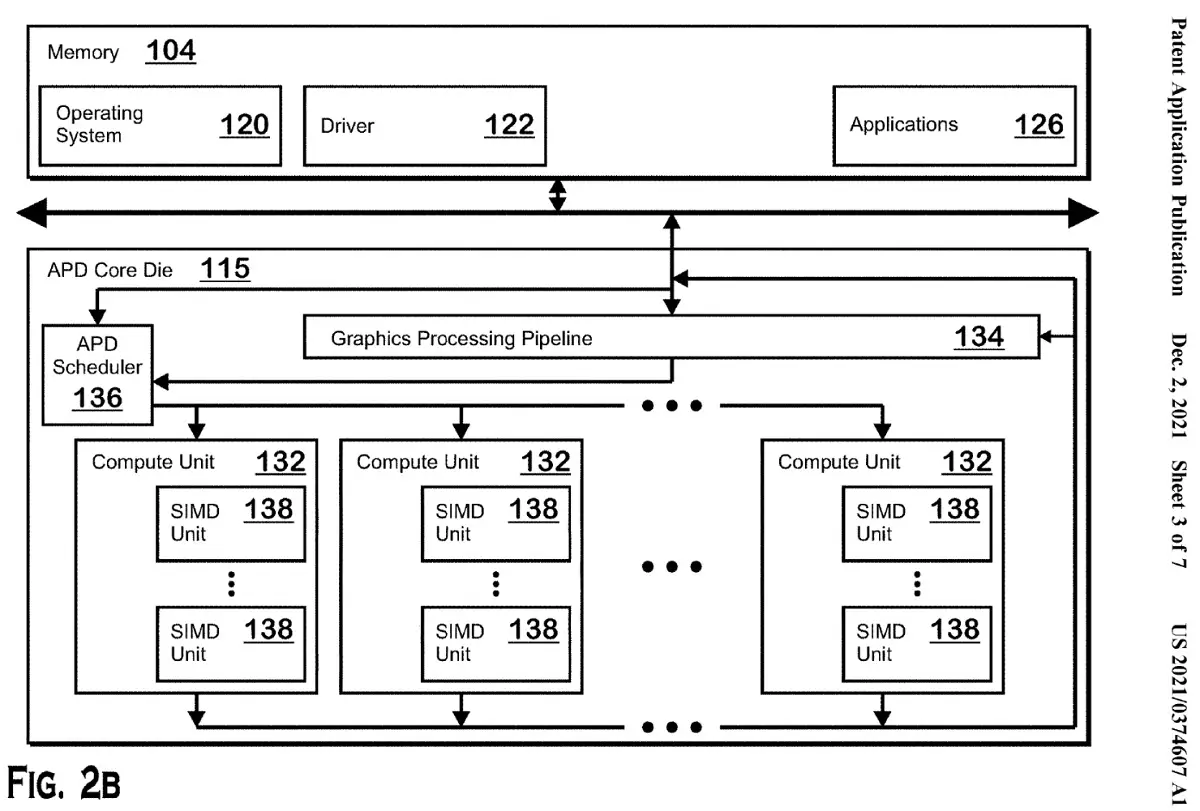

A recently discovered AMD GPU patent suggests that a future RDNA-generation GPU may add a stacked machine learning (ML) accelerator die to the APD (accelerated processing device, the patent's term for the GPU-class processor). The memory on the stacked die can serve as a cache for the APD, or it can be used directly for ML operations such as matrix multiplication. When a shader program running on the APD needs such an operation, it instructs a group of ML arithmetic logic units (ALUs) to carry it out over one or more inter-chip interconnects.
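The dual role of that memory can be pictured with a small toy model. This is only an illustrative sketch, not AMD's design: the `StackedMLDie` class, its `mode` switch, and the `run_shader` helper are hypothetical names invented here, and the model assumes the memory is in exactly one mode at a time, which the patent may not require.

```python
from dataclasses import dataclass, field


@dataclass
class StackedMLDie:
    """Toy stand-in for a stacked die whose memory is a cache or ML working memory."""
    mode: str = "cache"  # hypothetical switch: "cache" or "ml"
    cache: dict = field(default_factory=dict)

    def cache_lookup(self, address, load_from_dram):
        # In cache mode the memory simply holds data on behalf of the main chip.
        if self.mode != "cache":
            raise RuntimeError("memory is currently reserved for ML operations")
        if address not in self.cache:
            self.cache[address] = load_from_dram(address)
        return self.cache[address]

    def matmul(self, a, b):
        # In ML mode the on-die ALUs operate directly on data held in the memory.
        if self.mode != "ml":
            raise RuntimeError("memory is currently configured as a cache")
        return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
                for row in a]


def run_shader(die, a, b):
    """Stands in for a shader program sending work over the inter-chip interconnect."""
    die.mode = "ml"
    try:
        return die.matmul(a, b)
    finally:
        die.mode = "cache"  # hand the memory back to cache duty


die = StackedMLDie()
print(run_shader(die, [[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

The point of the sketch is only the division of labor: the shader side decides when to issue the matrix operation, while the stacked die owns both the memory and the ALUs that perform it.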

This AI/ML core may be AMD's answer to Nvidia's Tensor Cores. Handing certain GPU tasks to the stacked accelerator could improve performance and also raise efficiency in HPC and deep learning workloads. Compared with Nvidia's investment in artificial intelligence and deep learning, AMD's GPUs, including the latest Instinct MI200 series compute cards, have focused more on traditional computing. And whereas Nvidia integrates its machine learning hardware into the GPU itself, AMD's patent implements it as a dedicated module.